If you need to detect AI music, the honest answer is simple: no single method is perfect, but you can combine audio clues, metadata checks, platform signals, and detector tools to make a strong judgment. I use this practical approach in my own work as a producer and builder in Gothenburg, because hype fades fast and evidence holds up. This guide shows you how to spot AI-generated songs with less guesswork, including tracks shaped by Suno and Udio.

If you want the bigger picture on why this matters, I also recommend the future of AI in music production. AI music is no longer a niche topic. It affects listeners, labels, creators, distributors, and rights holders who need to make faster, better decisions.

What AI-generated music is and why detection matters

AI-generated music usually means a track that a model created, helped create, or assembled, rather than one a human recorded and arranged in the traditional way. That can include synthetic vocals, AI-written melodies, or hybrid tracks where a person edits model output into a finished release. The challenge is not only artistic. It is also commercial, legal, and reputational.

Detection matters because different people need different answers. A listener may want transparency. A label may want to screen submissions. A distributor may want to reduce fraudulent uploads. A creator may need proof that someone copied their voice, style, or catalog.

In practice, the question is often not “Does this sound good?” It is “Do the signals support a human origin?” That difference matters, especially when a track spreads fast on streaming platforms or social media. When you detect AI music, you are really building a case from multiple clues, not chasing a single magic test.

Common use cases for detection

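You usually need detection for one of the reasons above: transparency for listeners, submission screening for labels, fraud reduction for distributors, or proof of copying for creators.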

Why listeners, labels, and creators care

Listeners care because transparency shapes trust. Labels care because synthetic tracks can distort streams, marketing, and royalty flows. Creators care because false claims can damage credibility fast.

The BBC covered this well in its guide on spotting AI music, noting that AI vocals can sound slurred, consonants and plosives can feel off, and “ghost” harmonies may appear and disappear at random, but those are still hints, not proof (BBC News). That is the right mindset for anyone trying to detect AI music without overclaiming.

Can you reliably detect AI music?

You can often detect AI music with decent confidence, but you cannot prove it from one clue alone. I would not trust any method that promises perfect accuracy. Human listeners miss obvious synthetic traits, and AI output keeps improving.

In my own sessions, I treat detection like troubleshooting a mix. I do not make a call after one listen. I compare multiple signals, then I ask whether the evidence points in the same direction.

The limits of human detection

Most people cannot reliably identify AI songs on a first listen. That does not mean detection is impossible. It means your ears need support.

I have tested suspicious tracks in real sessions by looping a 20-second vocal section and checking where consonants fall apart. On one track, the phrasing sounded smooth at first, but the breath sounds repeated almost identically from line to line, which a real singer rarely does. On another, the chorus felt polished, but the transitions between lines snapped too cleanly, as if the model had stitched phrases together rather than sung them naturally.

I also compare the suspect track against known human production choices. A dense pop mix with tuning, vocal alignment, and layered doubles can sound artificial without being AI-generated. That is why I look beyond the first impression and keep the judgment probabilistic.
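One way to keep the judgment probabilistic is to treat each clue as a likelihood ratio and add the clues up in log-odds. The sketch below only illustrates the arithmetic. The prior and every ratio are made-up numbers, not measured values, and the function assumes the clues are independent, which real clues rarely are.

```python
import math

def combine_clues(prior, likelihood_ratios):
    """Combine a prior probability with per-clue likelihood ratios.

    Each ratio is P(clue | AI) / P(clue | human). Ratios above 1 push
    toward "AI", ratios below 1 push toward "human". Treating the clues
    as independent is a simplification.
    """
    if not 0.0 < prior < 1.0:
        raise ValueError("prior must be strictly between 0 and 1")
    log_odds = math.log(prior / (1.0 - prior))
    for ratio in likelihood_ratios:
        if ratio <= 0:
            raise ValueError("likelihood ratios must be positive")
        log_odds += math.log(ratio)
    # Convert log-odds back to a probability.
    return 1.0 / (1.0 + math.exp(-log_odds))

# Hypothetical numbers, chosen only to illustrate the calculation.
clues = {
    "repeated breath sounds": 3.0,
    "lines snap together too cleanly": 2.0,
    "artist has years of gig history": 0.25,
}
posterior = combine_clues(prior=0.2, likelihood_ratios=clues.values())
print(f"estimated probability of AI origin: {posterior:.2f}")
```

The point of the exercise is that one strong human-provenance signal can cancel out two audio oddities, which is why a single clue should never settle the question.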

Why false positives happen

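False positives happen for a few common reasons. Polished production, genre blending, and heavy processing can all make a legitimate track sound suspicious.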

A human track can sound robotic because of production choices, not because a model generated it. For context on how polished human music can become, see how AI changes music quality and mastering. If you want a wider view of why synthetic aesthetics are common now, the future of AI in music production shows how blurred the line has become.

How to detect AI music: audio clues and checks

When I try to detect AI music, I start with the sound itself. Audio clues will not prove generation on their own, but they tell you whether a track deserves a deeper check. I listen for repetition, transitions, vocal behavior, and the overall shape of the performance.

The biggest clue is often not one obvious error. It is a pattern of small oddities. AI-generated songs can sound smooth in one moment and strangely detached in the next. The vocals may land on pitch but lose human intent. The arrangement may feel coherent at first, then repeat a phrase too neatly or change section energy in a way that feels assembled.

In real sessions, I check the first verse, the first chorus, and one mid-track transition. If the verses, hooks, and fills all feel equally polished but emotionally flat, I pay attention. That does not prove anything, but it tells me to keep digging.

Best ways to detect AI music in vocals

Vocals are usually the fastest place to spot problems. The BBC mentioned slurred delivery, weak consonants, and ghost harmonies, and I have heard all three in suspicious tracks. I also listen for breath placement, vibrato consistency, and whether the singer sounds emotionally committed from line to line.

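Specific vocal red flags include slurred delivery, weak consonants, ghost harmonies, unnatural breath placement, and inconsistent vibrato.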

If the vocals are the weak point, I loop short sections and compare them to known human performances in the same genre. That is often enough to tell me whether I should move to metadata and platform checks.

Repetitive structure and phrasing

AI-generated songs often repeat melodic or lyrical ideas with less variation than a human would. That can sound catchy at first. However, when you listen closely, you may notice the same phrase length, cadence, or hook contour returning with little development.
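If you have the lyrics as text, you can put rough numbers on repetition and phrase length before you trust your impression. The sketch below is a minimal illustration with hypothetical function names. Human choruses repeat by design, so the values only mean something when you compare them with human reference tracks in the same genre.

```python
from collections import Counter
from statistics import pstdev

def repeated_line_ratio(lyrics):
    """Share of non-empty lyric lines that occur more than once."""
    lines = [ln.strip().lower() for ln in lyrics.splitlines() if ln.strip()]
    if not lines:
        return 0.0
    counts = Counter(lines)
    return sum(n for n in counts.values() if n > 1) / len(lines)

def line_length_spread(lyrics):
    """Population standard deviation of words per line (0.0 means uniform)."""
    lengths = [len(ln.split()) for ln in lyrics.splitlines() if ln.strip()]
    return pstdev(lengths) if len(lengths) > 1 else 0.0

# Hypothetical lyric sheet, used only to show the calls.
sample = """Hold me in the neon light
Hold me in the neon light
We were running through the night
Hold me in the neon light
"""
print(repeated_line_ratio(sample), line_length_spread(sample))
```

A high repeat ratio combined with a spread near zero matches the "same phrase length, same cadence" pattern described above, but it is a prompt to listen more closely, not a verdict.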

That matters most in long listens. I have heard tracks where the verse-to-chorus movement felt technically correct but emotionally static. Human writers tend to create small imperfections, timing shifts, and phrasing variations that make a song breathe.

Vocal artifacts and unnatural transitions

Vocal artifacts are one of the strongest clues when you try to detect AI music. You may hear clipped syllables, odd sibilance, unstable vibrato, or transitions between lines that feel too clean. Real singers often carry tiny timing differences from phrase to phrase. AI vocals can sound smoothed over.

This is where I listen twice. The first pass gives me the vibe. The second pass tells me whether the track has real performance behavior or just simulated performance behavior.

Spectral inconsistencies and over-clean production

Some AI tracks sound over-clean from top to bottom. Every element sits neatly in place, but the mix lacks depth, micro-dynamics, or believable room behavior. In contrast, human productions usually carry small imperfections in spacing, timing, and tone.

A polished mix alone means nothing. I have produced human tracks that sound extremely tight because the editing was excellent. However, when an arrangement feels sterile and the vocals lack human breath and strain, I keep the suspicion open.

Metadata and source checks

Metadata checks help you move from “this sounds odd” to “this deserves verification.” I always check file properties, upload history, and platform context before I make a call. That is especially useful when a track sounds synthetic but could still be human-made.

Metadata will not prove AI generation by itself. Still, it can expose gaps. No writer credits, no real artist footprint, inconsistent upload timelines, or sudden jumps in output all make me cautious.

I also look at where the track appears first. If a song surfaces on a new channel with no history, no social proof, and no performance trail, I treat it differently than a track from an established artist. That broader context often matters more than the audio file alone.

For adjacent context on how AI-based analysis can help in audio work, I also use AI audio analysis for mixes as a reference point. The same mindset applies here: use data to support your ears, not replace them.

File properties and upload history

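When I inspect a suspicious file, I look for missing writer credits, inconsistent timestamps, and an upload history that does not match the artist's apparent footprint.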

A clean file with weak provenance is not proof of AI. It is a signal to keep looking.
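The gaps mentioned above (missing credits, sudden jumps in output) are easy to check mechanically once you have collected the data by hand. The sketch below assumes a plain dictionary of metadata and a list of upload dates. The field names and the seven-day window are illustrative assumptions, not a platform schema.

```python
from datetime import date, timedelta

# Hypothetical field names; adapt them to whatever your source exposes.
EXPECTED_FIELDS = ("artist", "title", "writer_credits", "release_date")

def provenance_gaps(metadata):
    """Return the expected fields that are missing or blank."""
    gaps = []
    for field in EXPECTED_FIELDS:
        value = metadata.get(field)
        if value is None or not str(value).strip():
            gaps.append(field)
    return gaps

def max_uploads_in_window(upload_dates, days=7):
    """Largest number of uploads that fall inside any window of `days` days."""
    ordered = sorted(upload_dates)
    best = 0
    start = 0
    for end, current in enumerate(ordered):
        # Move the window start forward until the span is shorter than `days`.
        while current - ordered[start] >= timedelta(days=days):
            start += 1
        best = max(best, end - start + 1)
    return best

track = {"artist": "Example Act", "title": "Night Drive", "writer_credits": ""}
uploads = [date(2024, 1, 1), date(2024, 1, 2), date(2024, 1, 3), date(2024, 3, 1)]
print(provenance_gaps(track), max_uploads_in_window(uploads))
```

Both outputs are signals to keep looking. Plenty of legitimate independent releases ship with thin metadata.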

Platform clues from YouTube, Spotify, and social media

Platform context can be revealing. A song with no live performance clips, no rehearsal footage, and no meaningful social presence deserves more scrutiny than a track backed by years of posts and gig history. The BBC’s reporting on suspicious AI acts also pointed to minimal social footprints, no interviews, and no live evidence as useful indicators, especially when combined with other oddities.

That is why I check for live performance clips, rehearsal footage, interviews, and a social presence with real history.

If the artist exists only as a polished image and a handful of uploads, I slow down. That alone does not prove AI, but it changes the confidence level.

Best tools to detect AI-generated music

Tools help, but they do not settle the case. I treat every detector as supporting evidence. If a tool says “likely AI” but the audio and provenance look human, I do not stop there. If the tool agrees with the clues, I take the result more seriously.

Best AI music detector tools

The market is still evolving, but a few tools are worth knowing because they give different kinds of signals.

#### AI Music Detector by AHA Music

AHA Music’s AI Music Detector uses ACRCloud technology and claims high precision across full tracks and isolated components. It returns probability scores for the overall track, vocals, and accompaniment separately. That breakdown is useful because it shows where the suspicion comes from instead of giving only a blunt yes/no result.

#### AI Song Checker by SubmitHub

SubmitHub’s AI Song Checker lets you paste a link or upload a file and get a fast result. The service markets itself as free and “mostly accurate,” which is the right level of caution. I like that it is easy to use for quick screening, but I would never treat one result as final proof.

#### DeepMatch for music usage audits

DeepMatch is better understood as part of a usage-audit workflow than as a magic detector. It helps teams track matching, attribution, and usage patterns, which can matter when you need to investigate suspicious distribution or reuse behavior.

#### Free AI Music Detector by letssubmit.com

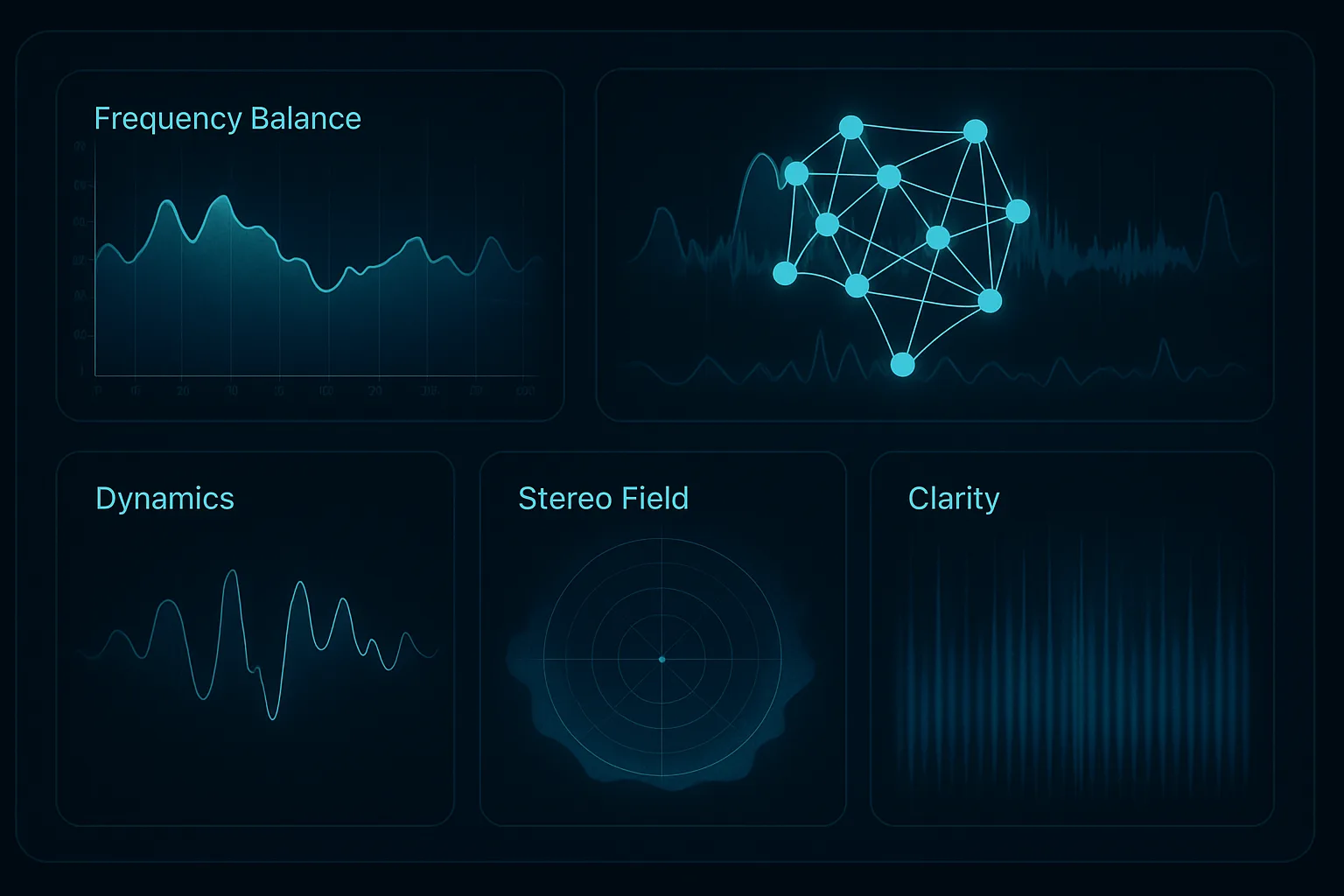

The free letssubmit.com checker analyzes 72 audio features, including MFCCs, spectral contrast, chroma features, and rhythmic patterns. It is trained on thousands of songs from AI generators like Suno and Udio, which makes it a relevant first-pass tool when you want to detect AI music quickly.
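To make "audio features" less abstract, here is a minimal sketch of two much simpler features, zero-crossing rate and spectral centroid, computed with the Python standard library only. These are stand-ins for illustration. They are not the 72 features the letssubmit.com checker uses, and MFCCs, spectral contrast, and chroma would normally come from a dedicated audio library.

```python
import cmath
import math

def zero_crossing_rate(samples):
    """Fraction of adjacent sample pairs whose signs differ."""
    if len(samples) < 2:
        return 0.0
    crossings = sum(
        1 for a, b in zip(samples, samples[1:]) if (a >= 0) != (b >= 0)
    )
    return crossings / (len(samples) - 1)

def spectral_centroid(samples, sample_rate):
    """Magnitude-weighted mean frequency of one frame, via a naive DFT."""
    n = len(samples)
    weighted = 0.0
    total = 0.0
    for k in range(n // 2 + 1):
        coeff = sum(
            x * cmath.exp(-2j * math.pi * k * i / n)
            for i, x in enumerate(samples)
        )
        magnitude = abs(coeff)
        weighted += (k * sample_rate / n) * magnitude
        total += magnitude
    return weighted / total if total else 0.0

# Synthetic test frame: a 1 kHz sine sampled at 8 kHz.
SAMPLE_RATE = 8000
frame = [math.sin(2 * math.pi * 1000 * i / SAMPLE_RATE) for i in range(256)]
print(spectral_centroid(frame, SAMPLE_RATE), zero_crossing_rate(frame))
```

The naive DFT is slow and only suitable for short frames. A detector would compute many such features per frame and feed them to a trained classifier, which is well beyond this sketch.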

However, every one of these tools has limits. They can strengthen a case, but they cannot replace context, human listening, or provenance checks.

Manual workflow to verify a suspicious track

When a track looks suspicious, I follow the same workflow every time. This keeps me from overreacting to one clue and helps me defend the conclusion later if I need to.

Step 1: Listen for audio red flags

I start with a focused listen. I check the intro, a vocal verse, a chorus, and one transition. I listen for repetition, unnatural phrasing, synthetic vocal artifacts, and over-clean production.

If the track raises flags, I loop the suspect section and compare it with a known human reference in the same genre. If the vocal phrasing feels too smooth or the harmonic movement feels pasted together, I write that down before I move on.
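Looping a section is usually done in a DAW or audio editor, but you can also cut the segment out as its own file so the suspect passage and the reference sit side by side. The sketch below uses Python's standard `wave` module and assumes an uncompressed PCM WAV file. The function name and the 20-second default are illustrative choices.

```python
import wave

def extract_segment(src_path, dst_path, start_s, duration_s=20.0):
    """Copy `duration_s` seconds of a PCM WAV file, starting at `start_s`.

    Returns the number of audio frames written.
    """
    with wave.open(src_path, "rb") as src:
        params = src.getparams()
        rate = src.getframerate()
        # Clamp the start so a late offset does not raise an error.
        src.setpos(min(int(start_s * rate), src.getnframes()))
        frames = src.readframes(int(duration_s * rate))
    with wave.open(dst_path, "wb") as dst:
        dst.setparams(params)
        # writeframes fixes the frame count in the header on close.
        dst.writeframes(frames)
    return len(frames) // (params.sampwidth * params.nchannels)
```

Calling `extract_segment("suspect.wav", "suspect_verse.wav", start_s=42.0)` would write the 20 seconds that begin at 0:42. The file names here are placeholders.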

Step 2: Check metadata and provenance

Next I verify the file and the upload trail. I check credits, timestamps, descriptions, and whether the artist has a believable history. If I can find the same track on multiple platforms, I compare the earliest upload and look for consistency in naming and metadata.
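Comparing uploads across platforms can be sketched as two small checks: find the earliest upload, then list the fields that disagree. The record layout below (`platform`, `uploaded_at`, `title`, `artist`) is a hypothetical structure you would fill in by hand, not something a platform API returns in this form.

```python
from datetime import datetime

def earliest_upload(uploads):
    """Return the upload record with the oldest ISO 8601 timestamp."""
    return min(uploads, key=lambda u: datetime.fromisoformat(u["uploaded_at"]))

def inconsistent_fields(uploads, fields=("title", "artist")):
    """List fields whose values differ across platforms (case-insensitive)."""
    mismatched = []
    for field in fields:
        values = {str(u.get(field, "")).strip().lower() for u in uploads}
        if len(values) > 1:
            mismatched.append(field)
    return mismatched

# Hand-collected example records; all values are invented.
uploads = [
    {"platform": "YouTube", "uploaded_at": "2024-03-02T09:00:00",
     "title": "Night Drive", "artist": "Example Act"},
    {"platform": "Spotify", "uploaded_at": "2024-02-27T00:00:00",
     "title": "Night Drive", "artist": "Example Act Official"},
]
print(earliest_upload(uploads)["platform"], inconsistent_fields(uploads))
```

A mismatch is only a reason to look closer. Distributors often rename artists or retitle releases for ordinary reasons.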

A few things matter most here: complete credits, consistent timestamps, and naming and metadata that match across platforms.

Step 3: Compare against known AI model patterns

Then I compare the track against known AI patterns. This is where I think about the usual weaknesses: overly smooth vocals, repeating phrases, strange harmonies, and transitions that feel stitched together. I also ask whether the song sounds like a generic prompt result rather than a specific creative performance.

This is not about forcing a match. It is about asking whether the clues resemble a model-generated workflow.

Step 4: Use a detector as supporting evidence

Finally, I run the track through a detector tool. I use the result as one more signal, not the final answer. If the tool, the audio clues, and the metadata all point the same way, I take the suspicion seriously.

If you want to go one layer deeper on analysis, AI audio analysis for mixes is a useful adjacent read. The same discipline applies whether you are evaluating a mix or a generated song: evidence first, assumption second.

How creators and rights holders should respond

Your response should match the strength of the evidence. Not every suspicious track deserves a takedown notice. Not every odd vocal line means fraud. Calm escalation works better than emotional reactions.

When to ignore it

Ignore the suspicion if the track has strong human provenance, a clear artist history, and only a few weak audio clues. A highly edited pop track can sound synthetic without being AI-generated. In those cases, I log the concern but move on.

When to investigate further

Investigate further if multiple signals line up: odd vocals, thin metadata, no social trail, and a detector result that also leans suspicious. That is the point where I compare versions, archive screenshots, and document what I found. If you need broader context on why this category is growing fast, the future of AI in music production is a good companion piece.
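Documentation holds up better when each note is tied to an exact copy of the audio and a timestamp. The sketch below builds such a record with a SHA-256 fingerprint of the file contents. The record fields and the example URL are assumptions for illustration, and a hash only proves the file is unchanged, not where it came from.

```python
import hashlib
import json
from datetime import datetime, timezone

def evidence_record(audio_bytes, source_url, notes):
    """Build a timestamped record tying notes to an exact copy of the audio."""
    return {
        "sha256": hashlib.sha256(audio_bytes).hexdigest(),
        "source_url": source_url,
        "recorded_at": datetime.now(timezone.utc).isoformat(),
        "notes": list(notes),
    }

# Placeholder bytes stand in for the contents of a downloaded audio file.
record = evidence_record(
    b"placeholder audio bytes",
    "https://example.com/track",
    ["breath sounds repeat across lines", "no writer credits"],
)
print(json.dumps(record, indent=2))
```

Storing the JSON next to the archived file and screenshots gives you something concrete to hand over if the case moves on to a platform report or legal review.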

When to escalate to takedown or legal review

Escalate when the evidence is strong and the risk is real. That usually means you have clear provenance issues, repeated suspicious uploads, or a likely rights violation. At that stage, save the files, keep timestamps, document platform links, and involve legal review if needed.
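The three responses above can be written down as a small decision rule so that the same evidence always leads to the same next step. This is a sketch under stated assumptions: the signal names and the thresholds (two aligned signals to investigate, three plus a rights risk to escalate) are illustrative choices, not fixed rules from any platform or legal standard.

```python
def triage(signals, strong_provenance=False, rights_risk=False):
    """Map aligned signals to "ignore", "investigate", or "escalate".

    `signals` maps a clue name to True when that clue looks suspicious.
    """
    aligned = sum(1 for flagged in signals.values() if flagged)
    # Strong human provenance outweighs a single weak clue.
    if strong_provenance and aligned <= 1:
        return "ignore"
    # Escalate only when several clues agree and the risk is real.
    if aligned >= 3 and rights_risk:
        return "escalate"
    if aligned >= 2:
        return "investigate"
    return "ignore"

# Hypothetical review of one track.
signals = {
    "odd vocals": True,
    "thin metadata": True,
    "no social trail": True,
    "detector leans AI": False,
}
print(triage(signals, rights_risk=True))
```

In this sketch "ignore" still means logging the concern before moving on, and the thresholds should be tuned to how costly a false accusation is in your setting.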

How to reduce false accusations

False accusations damage trust quickly. If you want to detect AI music responsibly, you also need to protect human creators from bad assumptions. I have heard plenty of legitimate tracks that triggered suspicion simply because they were polished, genre-blended, or heavily processed.

Human-made music that sounds synthetic

Many human tracks sound synthetic by design. EDM, hyperpop, cinematic pop, and some modern metal productions can feel hyper-quantized and ultra-clean. Vocal tuning, alignment, and sample layering can push a human performance into uncanny territory.

That is why I do not accuse based on sonic polish alone. I compare the song’s sound with the artist’s history, release pattern, and visible process. A sterile mix is a clue, not a verdict.

What evidence is actually useful

The most useful evidence usually comes from a combination of audio clues, metadata, platform context, and detector results.

You want to build a case, not a theory. If the evidence is weak, say so. If the evidence is strong, document it clearly and move carefully.

FAQ about detecting AI music

Can AI music detectors be trusted?

Yes, but only as supporting tools. Detectors can help you detect AI music, especially when they agree with audio clues and metadata checks. Still, they are not proof. I treat them as one signal in a broader review, not a final verdict.

Can Spotify or YouTube detect AI music?

Platforms can use internal systems, reporting tools, and policy checks, but they do not give you a public, reliable detector you can fully trust on its own. In practice, you still need your own ears, metadata review, and platform history to make a strong judgment.

How do you tell Suno or Udio music apart?

Look for repetitive phrasing, synthetic vocals, weak breath behavior, and transitions that feel stitched together. Then compare the track’s upload history, artist footprint, and credits. Suno and Udio tracks often reveal themselves through patterns, but never rely on one clue alone.

Is metadata enough to prove AI generation?

No. Metadata can raise suspicion, but it cannot prove generation by itself. A missing credit line or vague file history may point you in the right direction, yet the strongest conclusions come from combining metadata, audio clues, platform context, and detector results.

Conclusion

If you want to detect AI music without guessing, use the full stack: audio clues, metadata checks, platform signals, and detector tools. That is the only approach I trust in real sessions. It keeps you practical, calm, and hard to fool.

The main takeaways are simple: AI vocals often leave clues, false positives happen often, metadata matters, and detector tools support but do not replace judgment. When the evidence aligns, you can act with confidence. When it does not, you should slow down and keep checking.

Use the checklist in this guide the next time a suspicious track crosses your desk, and test a few detector tools on the same file. If you apply the process consistently, you will detect AI music with far less guesswork and much better results.

FAQ about detecting AI music

Can AI music detectors be trusted?

Yes, but only as part of a broader review. They work best when their output matches audio clues, metadata checks, and platform history. If the detector says one thing and the evidence says another, the detector should not win by default.

Can Spotify or YouTube detect AI music?

They may use internal moderation or policy systems, but those are not transparent, public tools you can independently verify. For practical work, assume you still need to do your own checks. Platform signals can help, but they rarely settle the question alone.

How do you tell Suno or Udio music apart?

I listen for flattened vocal intent, repetitive melodic phrasing, and transitions that feel stitched instead of performed. Then I check whether the artist has a real trail: social accounts, live footage, credits, and consistent uploads. The pattern matters more than one isolated oddity.

Is metadata enough to prove AI generation?

No. Metadata can expose gaps, but it cannot prove authorship on its own. Missing credits, unusual timestamps, or vague upload history should trigger deeper review. The strongest cases combine metadata with audio evidence and external context.